Why List Crawling is the Data Advantage Real Estate Professionals Need

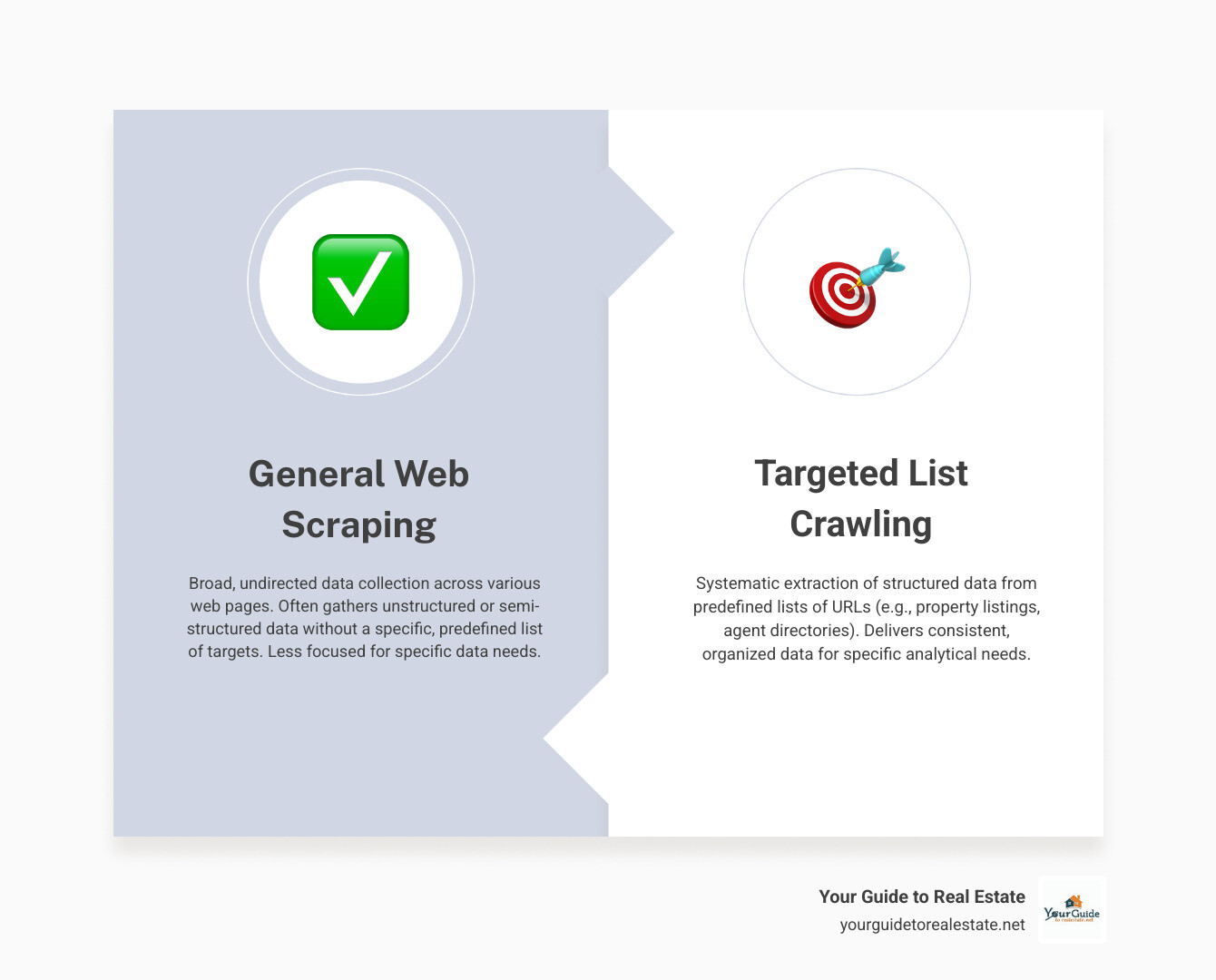

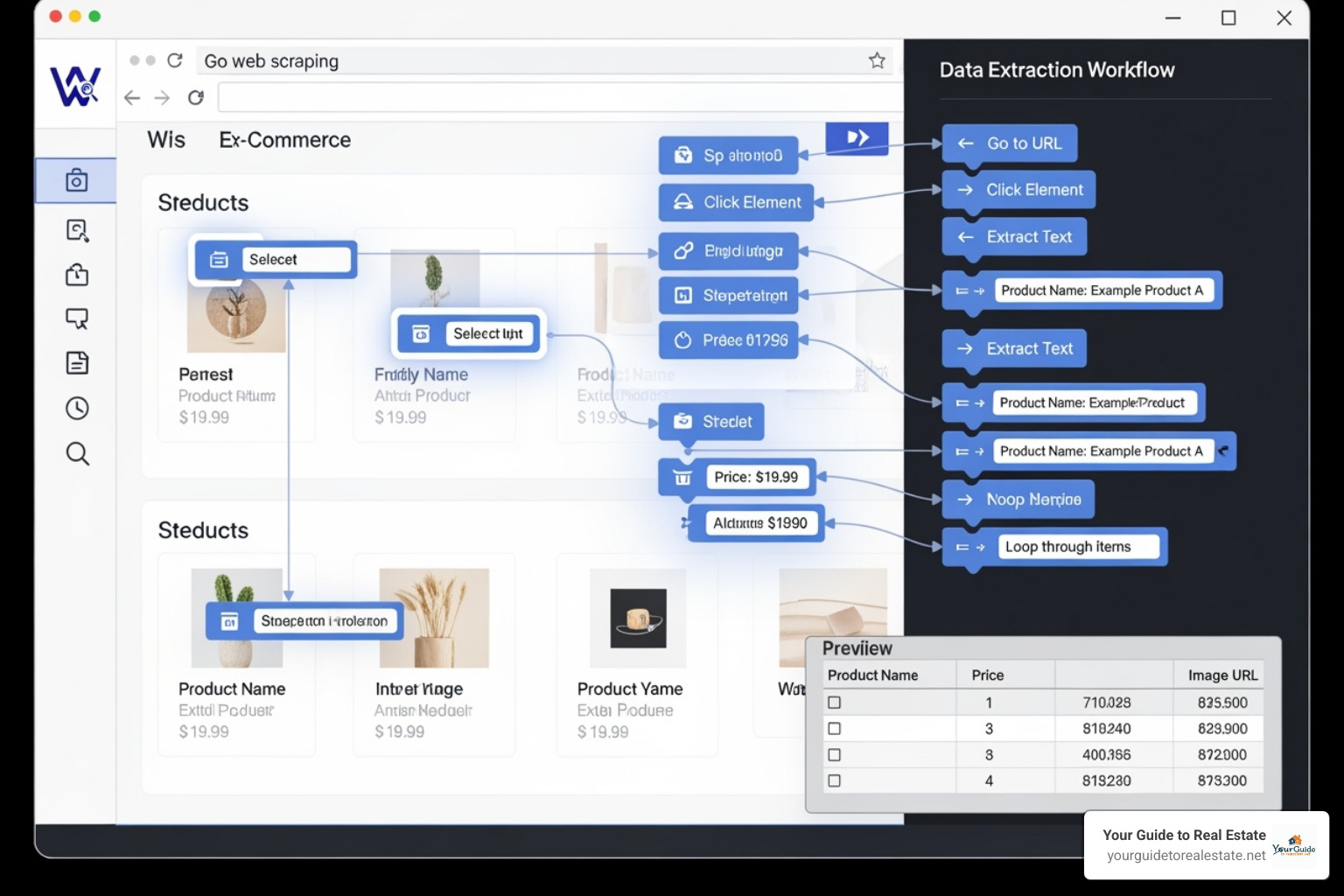

List crawling is a specialized web scraping technique that automatically extracts structured data from predefined lists of websites – think property listings, competitor sites, or market databases. Unlike general web scraping that wanders across random pages, list crawling targets specific URLs to gather consistent, organized information.

What List Crawling Does:

- Extracts structured data from multiple real estate websites simultaneously

- Automates data collection from property listings, agent profiles, and market reports

- Organizes information into usable formats like spreadsheets or databases

- Saves hours of manual copy-paste work across dozens of sites

- Provides real-time insights on pricing, inventory, and market trends

One real estate professional shared: “List crawling saved me hours of manual copy-paste! I can quickly extract job listings and contacts from any site.” This captures exactly why list crawling has become essential for modern real estate operations.

The difference between traditional web scraping and list crawling is like the difference between wandering through a library randomly versus having a specific reading list. List crawling focuses on structured data from lists – paginated property results, agent directories, or market comparison tables. This targeted approach makes it perfect for real estate professionals who need consistent, reliable data from multiple sources.

For real estate professionals navigating today’s competitive market, list crawling offers a significant advantage. Whether you’re tracking competitor pricing, building lead lists, or monitoring market trends, this automated approach delivers the structured data you need to make informed decisions quickly.

What is List Crawling and How Does it Work?

Think of list crawling as having a super-organized assistant who never gets tired. While regular web scraping is like sending someone to wander around a shopping mall collecting random information, list crawling is much more focused. It’s designed specifically to extract structured data from lists – exactly what you need when you’re dealing with property listings, agent directories, or market reports.

Here’s what makes list crawling so powerful for real estate professionals: it targets specific types of content. Whether you’re looking at paginated search results (those “Next Page” buttons on listing sites), infinite scroll feeds that load more properties as you scroll, or data tables with market comparisons, list crawling handles them all with precision.

The real payoff is automation. Instead of spending your Saturday morning copying and pasting property details from multiple websites, you can set up a list crawler to do it while you grab coffee. That frees up time to spend with clients and typically produces more accurate data than manual entry.

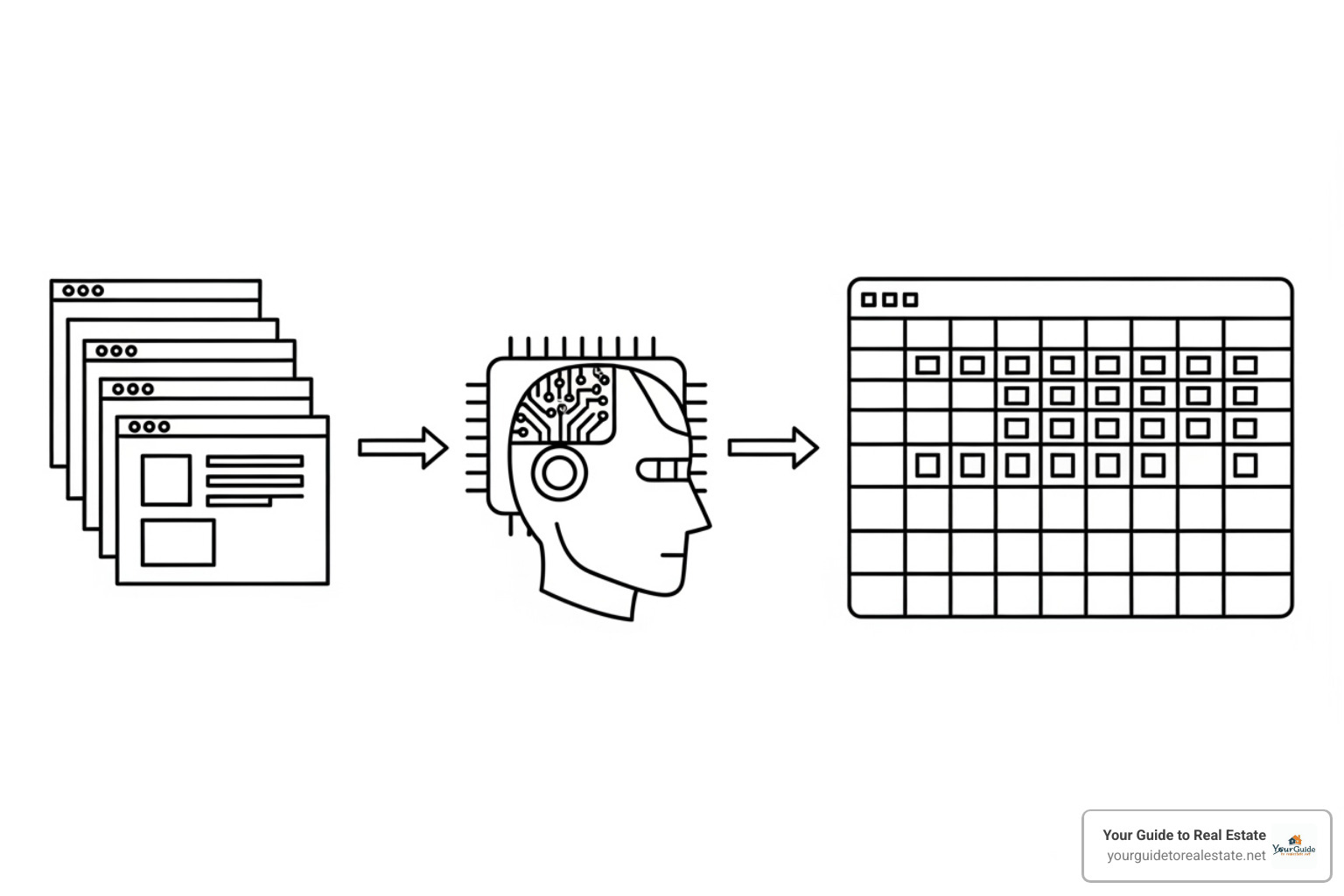

The Core Steps of the Process

Getting started with list crawling follows a straightforward path that anyone can understand.

Preparing a URL list comes first. This is where you gather the web addresses of all the pages containing the data you want. Maybe it’s every property listing page in your target neighborhood, or competitor agent profiles across different brokerages. You can create this list manually for smaller projects or use automated tools for larger data collection efforts.

Next comes configuring the crawler. This step is like teaching your assistant exactly what to look for and what to ignore. You set up rules telling the crawler to grab property prices but skip the website’s navigation menu, or to collect agent contact details while ignoring advertisement banners. In practice, these rules are usually CSS selectors or XPath expressions (or point-and-click selections in a no-code tool) that tell the crawler where each data point sits in a page’s HTML structure.

Running the crawl is where the magic happens. Your configured crawler visits each URL on your list, reads the webpage content, and applies your extraction rules. It works systematically through your entire list, pulling out exactly the information you specified. This process can handle hundreds or thousands of pages without breaking a sweat.

Finally, analyzing the data transforms your raw information into something useful. The crawler saves everything in structured formats like CSV files for Excel analysis, or directly into databases for more advanced reporting. Instead of scattered notes and bookmarks, you get clean, organized data ready for immediate analysis.
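
To make the four steps concrete, here is a minimal Python sketch that uses only the standard library. It assumes each listing page marks its fields with elements whose CSS classes are `price` and `address`; those class names, the example.com URLs, and the `fetch` function you pass in are hypothetical placeholders, not any real site’s markup.

```python
import csv
import io
from html.parser import HTMLParser

# Step 2: extraction rules, mapping a (hypothetical) CSS class to an output column.
RULES = {"price": "price", "address": "address"}


class FieldExtractor(HTMLParser):
    """Records the first text found inside elements whose class matches a rule."""

    def __init__(self, rules):
        super().__init__()
        self.rules = rules
        self.current = None  # column being captured right now, if any
        self.record = {}

    def handle_starttag(self, tag, attrs):
        for cls in (dict(attrs).get("class") or "").split():
            if cls in self.rules:
                self.current = self.rules[cls]

    def handle_endtag(self, tag):
        self.current = None

    def handle_data(self, data):
        if self.current and data.strip():
            self.record.setdefault(self.current, data.strip())


def crawl(urls, fetch):
    """Step 3: visit each URL and apply the extraction rules to its HTML."""
    rows = []
    for url in urls:
        extractor = FieldExtractor(RULES)
        extractor.feed(fetch(url))
        rows.append({"url": url, **extractor.record})
    return rows


def to_csv(rows, columns=("url", "price", "address")):
    """Step 4: turn the rows into CSV text that opens in Excel or Google Sheets."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Step 1: the URL list, with canned HTML standing in for real downloads.
urls = ["https://example.com/listing/1", "https://example.com/listing/2"]
fake_pages = {
    urls[0]: '<div class="price">$450,000</div><div class="address">12 Oak St</div>',
    urls[1]: '<div class="price">$389,000</div><div class="address">7 Elm Ave</div>',
}
print(to_csv(crawl(urls, fake_pages.get)))
```

Here `fake_pages.get` stands in for a real download; with the `requests` library, `fetch` could be something like `lambda url: requests.get(url, timeout=10).text`. Keeping the fetcher separate from the extraction rules makes it easy to test the rules offline before pointing them at a live site.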

Key Benefits for Your Business

The impact of list crawling on your real estate business goes far beyond simple convenience.

Time savings represent the most immediate benefit. One real estate professional put it perfectly: “List Crawling saved me hours of manual copy-paste!” What used to take entire afternoons now happens automatically in minutes. This freed-up time lets you focus on what really matters – building relationships with clients and closing deals.

Reduced human error makes your data much more reliable. When you’re manually copying property details at 11 PM after a long day of showings, mistakes happen. Automated list crawling eliminates those typos and missed fields that can throw off your market analysis.

Scalability means your data collection grows with your business. Whether you’re tracking 50 properties in one neighborhood or 5,000 listings across multiple markets, list crawling handles the workload without additional effort from you.

Real-time data keeps you ahead of market changes. Set up your crawler to check competitor listings every morning, and you’ll know about price drops or new inventory before other agents even log into their systems.

Most importantly, you’re gaining a competitive edge through better information. While other agents rely on outdated spreadsheets or manual research, you have fresh, comprehensive data driving your decisions. This advantage compounds over time, helping you identify opportunities others miss.

For more strategies on leveraging data and systems in your real estate business, explore our comprehensive guide on real estate business growth.

How List Crawling Revolutionizes Real Estate Strategy

In today’s real estate market, having the right information at the right time can make or break a deal. List crawling has become the secret weapon that transforms how real estate professionals gather insights and make strategic decisions.

Picture this: instead of spending hours manually checking dozens of websites for property updates, you can automatically gather thousands of listings, pricing changes, and market trends in minutes. That’s the power list crawling brings to your real estate strategy.

Competitive analysis becomes effortless when you can automatically track what other agents and brokerages are doing. You’ll see their pricing strategies, find which properties they’re pushing, and understand their marketing approaches – all without manually visiting each site.

Lead generation gets a major boost too. List crawling can extract contact information from business directories and professional networks (always respecting privacy rules, of course). This means you can build targeted prospect lists much faster than traditional methods.

For market research, this technology shines brightest. You can automatically collect data from multiple sources to spot emerging neighborhood trends, understand buyer preferences, and identify the best investment opportunities. It’s like having a research assistant that never sleeps.

Monitoring property listings becomes automatic rather than tedious. Set up your crawler once, and it will continuously watch for new listings, price changes, or status updates across all your target areas. You’ll be the first to know when opportunities arise.

Tracking price changes gives you a competitive edge in negotiations. When you have historical pricing data at your fingertips, you can make stronger offers and provide better guidance to clients.
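
Price and status tracking mostly comes down to comparing today’s crawl with an earlier one. The sketch below assumes each crawl is saved as a dictionary mapping a listing ID to its asking price; the IDs and prices are made up for illustration.

```python
def diff_snapshots(previous, current):
    """Compare two {listing_id: price} snapshots from different crawl runs."""
    new = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    changes = {
        listing_id: (previous[listing_id], current[listing_id])
        for listing_id in sorted(previous.keys() & current.keys())
        if previous[listing_id] != current[listing_id]
    }
    return new, removed, changes


yesterday = {"MLS-101": 450000, "MLS-102": 389000, "MLS-103": 512000}
today = {"MLS-101": 435000, "MLS-103": 512000, "MLS-104": 299000}

new, removed, changes = diff_snapshots(yesterday, today)
print("New listings:", new)
print("No longer listed:", removed)
for listing_id, (old, price) in changes.items():
    print(f"{listing_id}: {old:,} -> {price:,} ({price - old:+,})")
```

A listing that disappears may have sold, gone pending, or been withdrawn, so the "no longer listed" group is a prompt to check the source rather than a record of sales.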

For deeper insights into leveraging market data effectively, check out our guide on using data for a competitive market analysis.

Finding and Analyzing Investment Properties

List crawling transforms property investment from guesswork into a data-driven strategy. Instead of relying on gut feelings or limited information, you get comprehensive market intelligence.

Aggregating listings from multiple sources gives you the complete picture. Rather than checking individual MLS systems or real estate portals one by one, your crawler compiles everything into a single, organized dataset. This saves hours and ensures you never miss potential opportunities.
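
One practical wrinkle when aggregating is that the same home often appears on several portals with slightly different address formatting. A minimal sketch of merging sources and dropping duplicates, assuming each listing is a dictionary with an `address` key, might look like this; the normalization rules are deliberately crude and purely illustrative.

```python
import re

# Illustrative subset of street-suffix abbreviations.
SUFFIXES = {"street": "st", "avenue": "ave", "road": "rd", "drive": "dr"}


def normalize_address(address):
    """Lowercase, drop punctuation, and abbreviate common street suffixes."""
    text = re.sub(r"[^\w\s]", "", address.lower())
    return " ".join(SUFFIXES.get(word, word) for word in text.split())


def aggregate(*sources):
    """Merge listing lists, keeping the first record seen for each normalized address."""
    merged = {}
    for source in sources:
        for listing in source:
            merged.setdefault(normalize_address(listing["address"]), listing)
    return list(merged.values())


portal_a = [{"address": "12 Oak Street", "price": 450000, "source": "A"}]
portal_b = [
    {"address": "12 Oak St.", "price": 450000, "source": "B"},
    {"address": "7 Elm Avenue", "price": 389000, "source": "B"},
]
for listing in aggregate(portal_a, portal_b):
    print(listing)
```

Real address matching usually needs more than this (unit numbers, directionals, typos), which is why larger projects often lean on an address-standardization or geocoding service.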

Identifying undervalued properties becomes much easier with comprehensive data. When you can quickly compare thousands of properties across different platforms, patterns emerge. You’ll spot homes priced below market value, properties that have been sitting too long, or areas where prices haven’t caught up to their true potential.

Tracking market trends happens automatically instead of manually. Your list crawling system can monitor key indicators like average sale prices, inventory levels, and how long properties stay on the market. This up-to-date view helps you time your investments better and spot where the market may be heading.
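
Both ideas reduce to simple arithmetic once the listings sit in one dataset. The sketch below, using invented sample data and a hypothetical 15% threshold, computes inventory, median price per square foot, and average days on market for each area, then flags listings priced well below their area’s median. A low price per square foot is a lead to investigate, since condition, lot size, and location within the area can all explain the gap.

```python
from statistics import median

# Invented sample data for illustration.
listings = [
    {"id": "A1", "area": "Northside", "price": 400000, "sqft": 2000, "days_on_market": 12},
    {"id": "A2", "area": "Northside", "price": 420000, "sqft": 2000, "days_on_market": 30},
    {"id": "A3", "area": "Northside", "price": 300000, "sqft": 2000, "days_on_market": 95},
    {"id": "B1", "area": "Riverside", "price": 500000, "sqft": 2500, "days_on_market": 20},
]


def area_summary(listings):
    """Inventory, median price per sq ft, and average days on market for each area."""
    by_area = {}
    for item in listings:
        by_area.setdefault(item["area"], []).append(item)
    return {
        area: {
            "inventory": len(items),
            "median_ppsf": median(i["price"] / i["sqft"] for i in items),
            "avg_days_on_market": sum(i["days_on_market"] for i in items) / len(items),
        }
        for area, items in by_area.items()
    }


def flag_undervalued(listings, summary, threshold=0.15):
    """IDs whose price per sq ft is at least `threshold` below their area's median."""
    return [
        i["id"]
        for i in listings
        if i["price"] / i["sqft"] <= (1 - threshold) * summary[i["area"]]["median_ppsf"]
    ]


summary = area_summary(listings)
print(summary)
print("Worth a closer look:", flag_undervalued(listings, summary))
```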

The data you collect becomes your competitive advantage. While other investors are still manually researching properties, you’re already analyzing trends and making offers on the best opportunities.

Ready to dive deeper into investment strategies? Our comprehensive guide on how to invest in real estate will help you put this data to work.

Enhancing Your Website’s SEO

List crawling isn’t just for property data – it’s a powerful tool for boosting your online presence and attracting more clients through better search rankings.

Monitoring competitor keywords gives you insight into what’s working in your market. By crawling other real estate websites, you can see which keywords they target in their titles, headings, and page copy, analyze their content strategies, and identify gaps in your own SEO approach.

Finding backlink opportunities becomes systematic rather than random. Your crawler can identify websites that link to other real estate professionals but haven’t discovered your site yet. These represent golden opportunities to build your domain authority and improve search rankings.
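
A simple version of this check crawls pages that might link to agents in your market (local blogs, directories, news sites) and looks for ones that link to a competitor’s domain but not yours. In this sketch the domains are hypothetical and the pages are canned HTML; in practice they would come from your crawler.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the host names of every <a href> on a page, ignoring a leading 'www.'."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.hosts = set()

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href") if tag == "a" else None
        if href:
            host = urlparse(urljoin(self.base_url, href)).hostname
            if host:
                self.hosts.add(host[4:] if host.startswith("www.") else host)


def backlink_opportunities(pages, competitors, my_domain):
    """Return URLs of pages that link to a competitor but not to my_domain."""
    results = []
    for url, html in pages.items():
        collector = LinkCollector(url)
        collector.feed(html)
        if collector.hosts & competitors and my_domain not in collector.hosts:
            results.append(url)
    return results


pages = {
    "https://localblog.example/top-agents": '<a href="https://www.rival-realty.example/">Rival</a>',
    "https://news.example/housing": (
        '<a href="https://rival-realty.example/a">Rival</a>'
        '<a href="https://my-realty.example/">Me</a>'
    ),
}
print(backlink_opportunities(pages, {"rival-realty.example"}, "my-realty.example"))
```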

Identifying content gaps helps you create more valuable resources for potential clients. When you see what topics other professionals are covering (or missing), you can develop content that truly serves your audience’s needs and ranks well in search results.
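
One rough way to spot gaps is to compare the words used in competitors’ page titles and headings with your own. The sketch below is a crude keyword-overlap heuristic, not a full SEO tool: it keeps terms that appear in the headings of at least two competitor pages but nowhere in yours. The stopword list and sample HTML are illustrative assumptions.

```python
import re
from collections import Counter
from html.parser import HTMLParser

# Illustrative stopword list; a real one would be longer.
STOPWORDS = {"a", "an", "and", "for", "how", "in", "of", "or", "the", "to", "your"}


class HeadingText(HTMLParser):
    """Gathers the text inside <title>, <h1>, <h2> and <h3> elements."""

    TAGS = {"title", "h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.TAGS:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.TAGS and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def heading_terms(html):
    """Lowercase words from a page's title and headings, minus stopwords."""
    parser = HeadingText()
    parser.feed(html)
    words = re.findall(r"[a-z]+", " ".join(parser.chunks).lower())
    return {word for word in words if word not in STOPWORDS}


def content_gaps(competitor_pages, my_pages, min_pages=2):
    """Terms in headings of at least min_pages competitor pages but none of yours."""
    counts = Counter()
    for html in competitor_pages:
        counts.update(heading_terms(html))
    mine = set().union(*(heading_terms(html) for html in my_pages))
    return sorted(t for t, n in counts.items() if n >= min_pages and t not in mine)


competitors = [
    "<h1>Home inspection checklist</h1><h2>Property tax appeals</h2>",
    "<title>Property tax guide</title><h2>Home inspection costs</h2>",
]
mine = ["<h1>Home staging tips</h1>"]
print(content_gaps(competitors, mine))
```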

Tracking SERP rankings keeps you informed about your SEO progress. Instead of manually checking where you rank for important keywords, list crawling can monitor your positions automatically and alert you to changes. This real-time feedback helps you adjust your strategy quickly when needed.

The beauty of using list crawling for SEO is that it turns optimization from a guessing game into a strategic advantage. You’ll know exactly what’s working, what isn’t, and where your biggest opportunities lie.

Getting Started: Tools and Techniques

Ready to jump into list crawling? Here’s some great news: you don’t need to be a coding genius to get started. There’s a wonderful world of tools out there, each designed for different skill levels and project needs.

If you’re just starting out or prefer a more visual approach, no-code tools are your best friend. Think of tools like Octoparse, ParseHub, Data Miner, WebHarvy, Import.io, Helium Scraper, and UiPath. These platforms offer friendly drag-and-drop interfaces that make list crawling feel almost like playing with digital building blocks. You simply point and click on the data you want, and these tools figure out how to extract it. No programming required!

For those who love getting their hands dirty with code, developer frameworks open up a world of possibilities. Python is particularly popular here because it’s both powerful and relatively easy to learn. Libraries like requests help you talk to websites, while BeautifulSoup makes sense of all that messy HTML code. For more complex sites that rely heavily on JavaScript, Playwright can automate entire browser sessions.
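
As a rough illustration of the requests-plus-BeautifulSoup approach, the function below pulls a price and address out of each listing card on a results page using CSS selectors. The `listing-card`, `price`, and `address` class names are hypothetical; real sites use their own markup, which you would find with your browser’s developer tools. It assumes the `beautifulsoup4` package is installed.

```python
from bs4 import BeautifulSoup


def parse_listing_cards(html):
    """Return one dict per listing card, using CSS selectors for hypothetical class names."""
    soup = BeautifulSoup(html, "html.parser")
    listings = []
    for card in soup.select("div.listing-card"):
        price = card.select_one(".price")
        address = card.select_one(".address")
        listings.append({
            "price": price.get_text(strip=True) if price else None,
            "address": address.get_text(strip=True) if address else None,
        })
    return listings


sample = """
<div class="listing-card"><span class="price">$450,000</span><span class="address">12 Oak St</span></div>
<div class="listing-card"><span class="price">$389,000</span></div>
"""
print(parse_listing_cards(sample))
```

To run it against a live page, you would pass in downloaded HTML, for example `requests.get(url, timeout=10).text`. Missing fields come back as `None` rather than raising an error, so one incomplete card doesn’t stop the whole crawl.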

When you need serious firepower for large-scale projects, the Scrapy framework is the heavy-duty option. It’s built for speed and can handle massive list crawling operations with grace.

| Feature | No-Code Tools (e.g., Octoparse, ParseHub) | Developer Frameworks (e.g., Scrapy, Python with Beautiful Soup) |
|---|---|---|
| Ease of Use | High (visual interface, drag-and-drop) | Low to Medium (requires coding knowledge) |
| Coding Required | None to Minimal | Yes (Python, JavaScript, etc.) |
| Flexibility | Limited (pre-built features, templates) | High (fully customizable scripts) |
| Scalability | Good for medium projects, cloud-based options | Excellent for large-scale, complex projects |
| Cost | Often subscription-based (free tiers available) | Free (open-source libraries), but development time/expertise is a cost |
| Best For | Beginners, quick projects, non-technical users | Developers, complex sites, high-volume data extraction, unique requirements |

Handling Different Types of Web Content

Here’s where things get interesting. The web is like a giant, messy library where every book is organized differently. Your list crawling success depends on knowing how to read each type of “book.”

Paginated lists are probably the most common challenge you’ll face. You know those property search results that show “Page 1 of 47”? Your crawler needs to be smart enough to click through all those pages automatically. Most good tools can detect those “Next” buttons and follow them until they’ve gathered everything.
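
For sites that mark their “Next” link with `rel="next"` (a common convention, though not every site follows it), following pagination can be sketched in a few lines. The `fetch` function and the example URLs are placeholders; the `max_pages` cap and the `seen` set keep a misconfigured site from sending the crawler in circles.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class NextLinkFinder(HTMLParser):
    """Remembers the href of the first <a> or <link> tagged rel="next"."""

    def __init__(self):
        super().__init__()
        self.next_href = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rels = (attributes.get("rel") or "").split()
        if tag in ("a", "link") and "next" in rels and self.next_href is None:
            self.next_href = attributes.get("href")


def crawl_pages(start_url, fetch, max_pages=100):
    """Follow rel="next" links from start_url and return each page's HTML."""
    pages, seen, url = [], set(), start_url
    while url and url not in seen and len(pages) < max_pages:
        seen.add(url)
        html = fetch(url)
        pages.append(html)
        finder = NextLinkFinder()
        finder.feed(html)
        url = urljoin(url, finder.next_href) if finder.next_href else None
    return pages


START = "https://example.com/search?page=1"
site = {
    START: '<ul><li>Listing 1</li></ul><a rel="next" href="?page=2">Next</a>',
    "https://example.com/search?page=2": '<ul><li>Listing 2</li></ul><a rel="next" href="?page=3">Next</a>',
    "https://example.com/search?page=3": "<ul><li>Listing 3</li></ul>",
}
print(len(crawl_pages(START, site.get)), "pages fetched")
```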

Infinite scroll sites are trickier. These are the ones where new listings just keep appearing as you scroll down, like magic. Social media feeds work this way, and so do some modern real estate sites. For these, you need tools that can actually “scroll” the page and wait for new content to load. It’s like having a very patient robot finger doing all the scrolling for you.
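
Browser automation is one way to handle this. Another, when it applies, is to notice that many infinite-scroll pages load each new batch from a JSON endpoint, which you can often spot in the Network tab of your browser’s developer tools, and to request those batches directly. The sketch below assumes a hypothetical endpoint that accepts `offset` and `limit` parameters and returns `{"items": [...], "has_more": true|false}`; real endpoints vary.

```python
def fetch_all_items(fetch_json, limit=20, max_items=1000):
    """Request successive batches from an offset-based feed until it runs out."""
    items, offset = [], 0
    while len(items) < max_items:
        batch = fetch_json(offset=offset, limit=limit)
        items.extend(batch["items"])
        if not batch["items"] or not batch.get("has_more"):
            break
        offset += len(batch["items"])
    return items[:max_items]


# A fake feed with 45 listings stands in for the real endpoint.
ALL_LISTINGS = [{"id": n} for n in range(45)]


def fake_feed(offset, limit):
    chunk = ALL_LISTINGS[offset:offset + limit]
    return {"items": chunk, "has_more": offset + limit < len(ALL_LISTINGS)}


print(len(fetch_all_items(fake_feed)), "listings collected")
```

Against a real endpoint, `fetch_json` could wrap something like `requests.get(FEED_URL, params={"offset": offset, "limit": limit}, timeout=10).json()`, where `FEED_URL` is a placeholder for whatever address the page itself calls.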

Dynamic content powered by JavaScript can be the trickiest of all. Many modern websites don’t show their full content until JavaScript runs in your browser. Crawlers that only download the raw HTML (such as a plain requests call) can miss this content entirely, like trying to read a pop-up book with your eyes closed. That’s where browser automation tools like Selenium or Playwright come in handy: they open a real browser, let the JavaScript run, and interact with the page just like you would.

Data tables are usually the easiest to work with. When information is neatly organized in rows and columns, most list crawling tools can extract it beautifully. It’s like having data served to you on a silver platter.
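
Because tables already have rows and columns, turning one into records is straightforward. This standard-library sketch assumes a simple table with a header row, closed `<td>`/`<th>` tags, and no merged cells; the sample figures are invented. If you already use pandas, `pandas.read_html` does much the same in one call, though it needs an HTML parser such as lxml installed.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the cell text of every row in simple HTML tables."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self.row = None   # cells of the row being read
        self.cell = None  # text fragments of the cell being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.row = []
        elif tag in ("td", "th") and self.row is not None:
            self.cell = []

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self.cell is not None:
            self.row.append(" ".join("".join(self.cell).split()))
            self.cell = None
        elif tag == "tr" and self.row is not None:
            self.rows.append(self.row)
            self.row = None

    def handle_data(self, data):
        if self.cell is not None:
            self.cell.append(data)


def table_to_dicts(html):
    """Use the first row as column names and return the remaining rows as dicts."""
    parser = TableParser()
    parser.feed(html)
    header, *body = parser.rows
    return [dict(zip(header, row)) for row in body]


SAMPLE_TABLE = """
<table>
  <tr><th>Neighborhood</th><th>Median price</th><th>Days on market</th></tr>
  <tr><td>Northside</td><td>$410,000</td><td>21</td></tr>
  <tr><td>Riverside</td><td>$385,000</td><td>34</td></tr>
</table>
"""
for record in table_to_dicts(SAMPLE_TABLE):
    print(record)
```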

This variety in web content is part of how technology is revolutionizing real estate – making more information accessible than ever before, once you know how to get to it.

Advanced Techniques for Effective List Crawling

Once you’ve got the basics down, there are some clever tricks that can make your list crawling much more effective and less likely to run into problems.

Using proxies is like having multiple postal addresses. When a website gets suspicious about too many requests from the same location, proxies let you appear to be coming from different places. Used within a site’s terms of service, they can help keep your crawling smooth.

Rotating user agents means your crawler can pretend to be different browsers. One minute it’s Chrome on Windows, the next it’s Safari on Mac. This variety helps avoid detection patterns that websites might be watching for.
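
Mechanically, rotation is just cycling through lists. The sketch below builds keyword arguments for the `requests` library’s `get` function, switching proxy and user-agent string on every call. The proxy addresses and agent strings are placeholders to replace with proxies you are authorized to use and user-agent strings of your choosing.

```python
from itertools import cycle

# Placeholder values: replace with your own proxies and user-agent strings.
PROXIES = ["http://proxy-a.example:8080", "http://proxy-b.example:8080"]
USER_AGENTS = ["placeholder-agent-1", "placeholder-agent-2", "placeholder-agent-3"]

proxy_pool = cycle(PROXIES)
agent_pool = cycle(USER_AGENTS)


def next_request_options():
    """Keyword arguments for requests.get(), rotating proxy and user agent on each call."""
    proxy = next(proxy_pool)
    return {
        "headers": {"User-Agent": next(agent_pool)},
        "proxies": {"http": proxy, "https": proxy},
        "timeout": 10,
    }


# Usage with requests (not executed here):
#     response = requests.get(url, **next_request_options())
```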

Handling CAPTCHAs is probably the most frustrating part of list crawling. These “prove you’re human” tests are specifically designed to stop automation. While some services can help solve them, the best approach is often to crawl so respectfully that you never trigger them in the first place.

Smart scheduling is all about being a good neighbor. Running your crawls during off-peak hours means you’re not competing with regular users for the website’s attention. Think of it like doing your grocery shopping at 2 AM – everything goes smoother when there’s less traffic.

The key to all these advanced techniques is patience and respect. The websites you’re crawling are someone’s business, and treating them gently ensures everyone benefits from the wealth of information available online.

Common Challenges and Ethical Best Practices

Let’s be honest – list crawling isn’t always smooth sailing. Even with the best tools and intentions, you’ll likely bump into some roadblocks along the way. The good news? Most challenges are manageable when you know what to expect.

Website layout changes are probably the most frustrating challenge you’ll face. Just when you’ve got your list crawling setup running perfectly, a website decides to redesign their property listing pages. Suddenly, your carefully configured crawler can’t find the data it’s looking for. It’s like showing up to your favorite restaurant only to find they’ve completely changed the menu.
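
You can’t prevent redesigns, but you can notice them quickly. One simple approach, sketched here with hypothetical field names and an arbitrary 20% tolerance, is to check each crawl’s output for required fields that suddenly come back empty and raise a warning before bad data reaches your spreadsheets.

```python
REQUIRED_FIELDS = ("price", "address")  # hypothetical; match your own extraction rules


def check_extraction(rows, required=REQUIRED_FIELDS, max_missing_share=0.2):
    """Warn when a required field is empty in too many rows, a common sign of a redesign."""
    if not rows:
        return ["No rows were extracted at all"]
    warnings = []
    for field in required:
        missing = sum(1 for row in rows if not row.get(field))
        share = missing / len(rows)
        if share > max_missing_share:
            warnings.append(f"{field}: missing in {missing} of {len(rows)} rows ({share:.0%})")
    return warnings


rows = [
    {"price": "$450,000", "address": "12 Oak St"},
    {"price": "", "address": "7 Elm Ave"},
    {"address": "3 Pine Rd"},
]
for warning in check_extraction(rows):
    print("WARNING:", warning)
```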

Anti-scraping measures have become increasingly sophisticated. Many real estate websites now use rate limiting, CAPTCHAs, and bot detection systems. These aren’t personal attacks on your data collection efforts – they’re protective measures websites use to manage server load and prevent abuse.

IP blocking happens when websites detect too many requests coming from your address in a short time. Think of it as a digital bouncer saying “slow down there, friend.” This is especially common when you’re eager to gather data quickly from multiple property sites.
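
When a site does push back, it often responds with HTTP status 429 (Too Many Requests) or 503, sometimes with a `Retry-After` header saying how long to wait. A sketch of backing off politely, assuming a `fetch` function of your own that returns the status code, headers, and body, might look like this. It only understands `Retry-After` values given in seconds (the header may also carry a date), and the retry limits are arbitrary.

```python
import random
import time


def fetch_with_backoff(fetch, url, max_retries=5, base_delay=1.0, sleep=time.sleep):
    """Retry on HTTP 429/503, honoring Retry-After seconds or backing off exponentially.

    `fetch(url)` is your own function returning (status_code, headers, body).
    """
    for attempt in range(max_retries + 1):
        status, headers, body = fetch(url)
        if status not in (429, 503):
            return status, body
        if attempt == max_retries:
            break
        retry_after = str(headers.get("Retry-After", "")).strip()
        if retry_after.isdigit():
            delay = int(retry_after)  # server said how many seconds to wait
        else:
            # Exponential backoff (1 s, 2 s, 4 s, ...) plus random jitter.
            delay = base_delay * 2 ** attempt + random.uniform(0, base_delay)
        sleep(delay)
    raise RuntimeError(f"Still rate limited after {max_retries} retries: {url}")


# Simulated server: two "slow down" responses, then success.
responses = iter([(429, {"Retry-After": "3"}, ""), (429, {}, ""), (200, {}, "<html>ok</html>")])
waits = []
print(fetch_with_backoff(lambda url: next(responses), "https://example.com/listings", sleep=waits.append))
print("Waited:", waits)
```

With `requests`, `fetch` could be a small wrapper around `requests.get` that returns `response.status_code`, `response.headers`, and `response.text`.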

The trickier challenges involve legal considerations and data privacy. Real estate data often contains sensitive information, and collecting it requires careful attention to website terms of service, privacy policies, and regulations like GDPR and CCPA.

These challenges might seem daunting, but they’re all part of building robust real estate business systems that stand the test of time.

Staying Compliant and Ethical

Here’s where we separate the professionals from the amateurs. Ethical list crawling isn’t just about avoiding legal trouble – it’s about being a good digital citizen while gathering the data you need.

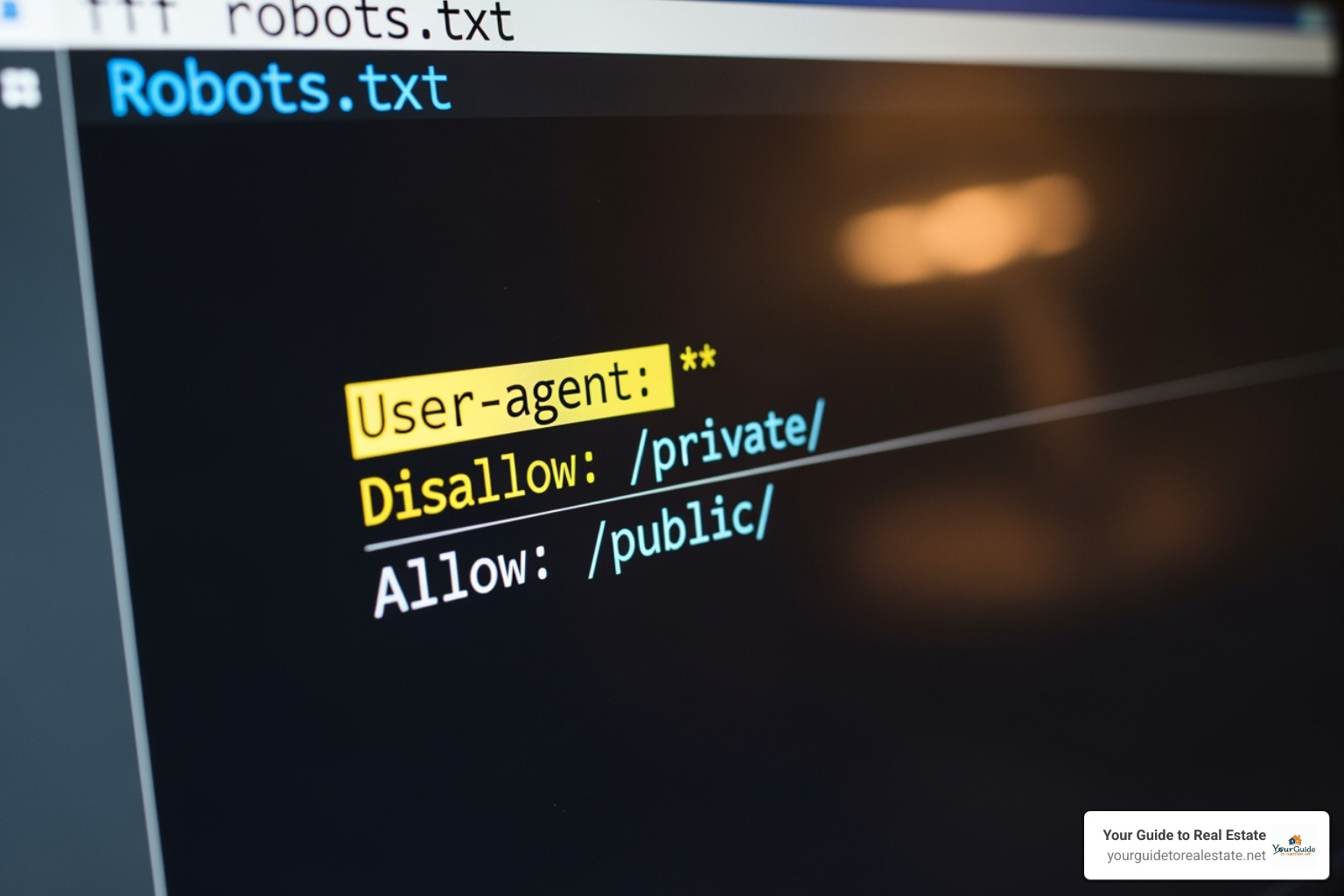

Respecting robots.txt is your first step toward ethical crawling. This small text file is like a property’s “No Soliciting” sign – it tells crawlers which areas of a website are off-limits. Ignoring robots.txt is like walking into someone’s backyard without permission. Not cool, and potentially illegal.
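
Python’s standard library includes a robots.txt parser, so honoring the file takes only a few lines. This sketch parses a sample file inline so it runs offline; against a live site you would call `set_url()` with the site’s robots.txt address and then `read()`. The crawler name `ExampleCrawler` is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())

for url in ("https://example.com/listings/123", "https://example.com/private/owners"):
    verdict = "allowed" if robots.can_fetch("ExampleCrawler", url) else "disallowed"
    print(url, verdict)

print("Requested crawl delay (seconds):", robots.crawl_delay("ExampleCrawler"))
```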

Reading Terms of Service might be about as exciting as reading insurance policies, but it’s crucial. These documents spell out exactly what website owners allow and prohibit. Some real estate sites explicitly forbid automated data collection, while others are more permissive. Knowing the difference keeps you out of hot water.

Avoiding server overload is both ethical and practical. When you bombard a website with rapid-fire requests, you’re essentially creating a traffic jam on their digital highway. This can slow down the site for legitimate users – imagine trying to browse property listings while someone’s crawler is hammering the server. Be patient, space out your requests, and crawl at a reasonable pace.

Responsible data use means treating collected information with respect. Don’t collect personal information without explicit permission. Focus on publicly available data like property prices, listing details, and market statistics. Just because data is accessible doesn’t mean it’s free to use however you want.
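
One way to put this into practice is to keep an explicit allowlist of property-level fields and drop everything else as records come in. The field names below are hypothetical examples; the design choice is that an allowlist fails safe, because any new personal field a site adds is discarded by default rather than quietly collected.

```python
# Hypothetical allowlist of property-level fields you have decided to keep.
ALLOWED_FIELDS = {"address", "price", "beds", "baths", "sqft", "status", "listed_date"}


def keep_allowed_fields(record, allowed=ALLOWED_FIELDS):
    """Return a copy of the record containing only allowlisted fields."""
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "address": "12 Oak St",
    "price": 450000,
    "beds": 3,
    "owner_name": "J. Doe",
    "owner_phone": "555-0100",
}
print(keep_allowed_fields(raw))
```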

GDPR and CCPA basics apply when you’re dealing with data from European users or California residents respectively. These regulations have teeth – violations can result in hefty fines. The key principle is simple: be transparent about what data you collect and why, and give people control over their information.

The bottom line? Successful list crawling requires balancing efficiency with ethics. By following these guidelines, you’ll build sustainable data collection practices that serve your real estate business well without stepping on anyone’s toes. It’s about being smart, respectful, and professional in your approach to gathering market intelligence.

Frequently Asked Questions about List Crawling

When we talk with real estate professionals about list crawling, we notice the same thoughtful questions come up again and again. Let’s tackle the most important ones together.

Is list crawling legal for real estate data?

This is probably the most important question you can ask, and we’re glad you’re thinking about it! The short answer is yes – list crawling is generally legal for real estate data, but there are some important guidelines to follow.

The legality depends on a few key factors. First, you need to respect the website’s terms of service. Think of it like the house rules – every website gets to set their own boundaries about what’s okay and what isn’t. Some sites welcome data collection, while others might restrict it.

Publicly available data is usually fair game. Most real estate information – like listing prices, property features, square footage, and neighborhood details – is meant to be public. After all, sellers want buyers to find their properties! This type of information is typically fine to collect through list crawling.

Personal data requires extra care. While property details are usually public, personal information about agents, sellers, or buyers needs to be handled differently. We always avoid collecting personal data without explicit permission, and we’re especially careful about contact information that isn’t meant for public use.

The key is being respectful and ethical in your approach. When you follow the rules and focus on publicly available real estate data, list crawling becomes a powerful and legal tool for your business.

Do I need to be a programmer to start list crawling?

Here’s some great news – you absolutely don’t need to be a programmer to get started! While coding skills can open up more advanced possibilities, they’re definitely not required for most real estate professionals.

No-code tools make list crawling accessible to everyone. These user-friendly platforms let you point, click, and extract data without writing a single line of code. You can visually select the information you want from a webpage, and the tool handles all the technical work behind the scenes.

Many of these tools offer visual selectors and browser extensions that work right in your web browser. You simply highlight the data you want to collect, and the tool figures out how to extract similar information from other pages. It’s surprisingly intuitive once you try it.

Programming adds flexibility for larger projects. If you do have coding skills or want to learn, languages like Python with libraries such as BeautifulSoup or frameworks like Scrapy give you incredible control and customization options. But for most real estate applications – tracking competitor listings, gathering market data, or building lead lists – the no-code tools work beautifully.

The best part? Many of these tools offer free plans or trials, so you can experiment and see what works for your specific needs before committing to anything.

How can I avoid being blocked by websites?

Getting blocked by websites is frustrating, but it’s largely avoidable when you know the right techniques. Think of it like being a considerate house guest: you want to be helpful without overstaying your welcome.

Rate limiting is your best friend. This means controlling how fast you send requests to a website. Instead of rapid-fire requests that might overwhelm a server, we add polite pauses between each request – usually 1-3 seconds. This mimics how a real person would browse the site and keeps you under the radar.
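
A per-site throttle along these lines is easy to sketch. It waits a random 1 to 3 seconds (the range mentioned above) between requests to the same host while letting requests to different hosts proceed independently. The class name is our own, and the clock and sleep functions are injectable only so the behavior can be tested without real waiting.

```python
import random
import time
from urllib.parse import urlparse


class PoliteThrottle:
    """Spaces out requests to each host by a random delay between min_delay and max_delay."""

    def __init__(self, min_delay=1.0, max_delay=3.0, clock=time.monotonic, sleep=time.sleep):
        self.min_delay, self.max_delay = min_delay, max_delay
        self.clock, self.sleep = clock, sleep
        self.next_allowed = {}  # host -> earliest time its next request may start

    def wait(self, url):
        """Block until the URL's host may be contacted, then schedule its next slot."""
        host = urlparse(url).netloc
        now = self.clock()
        ready_at = self.next_allowed.get(host, now)
        if ready_at > now:
            self.sleep(ready_at - now)
        start = max(now, ready_at)
        self.next_allowed[host] = start + random.uniform(self.min_delay, self.max_delay)


# Typical use inside a crawl loop (fetch is your own download function):
#     throttle = PoliteThrottle()
#     for url in urls:
#         throttle.wait(url)
#         html = fetch(url)
```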

Using proxies helps distribute your requests. Websites often track IP addresses to identify automated behavior. By rotating through different proxy servers, your requests appear to come from various locations, making your list crawling look more natural and less concentrated.

Mimicking human behavior makes all the difference. Real people don’t browse websites like robots. They vary their browsing patterns, use different browsers, and don’t click at perfectly timed intervals. Good crawling tools can rotate user agents (which identify your browser), randomize request timing, and vary other technical details to appear more human-like.

The golden rule is simple: be respectful of the website’s resources. If you’re crawling during off-peak hours and keeping your request speed reasonable, most websites won’t even notice your activity. It’s about finding that sweet spot where you get the data you need without causing any strain on their servers.

The goal isn’t to outsmart website security – it’s to collect data responsibly while being a good digital citizen.

Conclusion

List crawling has emerged as a game-changing tool for real estate professionals who want to stay ahead in today’s competitive market. Throughout our journey together, we’ve seen how this powerful technique transforms the way we collect and use data.

The benefits speak for themselves. We’re talking about saving countless hours of tedious copy-paste work, eliminating human errors that can cost us deals, and gaining access to real-time market insights that keep us one step ahead of the competition. When you can automatically gather property data, track pricing trends, and monitor competitor strategies, you’re operating with a significant advantage.

We’ve explored how list crawling revolutionizes real estate strategy in practical ways. Whether you’re analyzing investment properties by aggregating listings from multiple sources, identifying undervalued opportunities through data patterns, or boosting your website’s SEO by understanding competitor keywords, the applications are endless. The ability to make data-driven decisions rather than relying on gut feelings alone is what separates successful professionals from the rest.

The tools available today make this technology accessible to everyone. From no-code solutions that let you point and click your way to valuable data, to powerful developer frameworks for those wanting maximum customization, there’s an option for every skill level and budget. You don’t need to be a programmer to get started, but the door is always open to grow your technical skills as your needs evolve.

Perhaps most importantly, we’ve emphasized the critical importance of ethical practices. Respecting robots.txt files, following terms of service, and protecting data privacy aren’t just legal requirements – they’re the foundation of sustainable, responsible data collection that builds trust and long-term success.

Looking ahead, the future of data in real estate is incredibly exciting. AI-driven crawlers are already on the horizon, promising even more sophisticated analysis and automation capabilities. As information continues to be the currency of success in our industry, mastering list crawling positions you at the forefront of these innovations.

At Your Guide to Real Estate, we believe in empowering you with the knowledge and tools that make a real difference in your career. List crawling represents more than just a technical skill: it’s a pathway to opening up new opportunities and streamlining your operations in ways that seemed impossible just a few years ago.

The real estate landscape continues to evolve, and those who embrace data-driven approaches will find themselves with the clearest path to success. Start small, stay ethical, and watch how list crawling transforms your approach to market research, lead generation, and strategic planning.

Ready to take the next step in your career? Learn how to choose the right real estate brokerage.